Mark Tilden (1961–) is a Canadian freelance designer of biomorphic robots. A number of his robots are sold as toys, and others have appeared as props in television and film.

Tilden is well known for his opposition to the notion that strong artificial intelligence is required for complicated robots.

Tilden is a pioneer in the field of BEAM robotics (biology, electronics, aesthetics, and mechanics). To replicate biological neurons, BEAM robots use analog circuits and systems with continuously varying signals, rather than digital electronics and microprocessors.

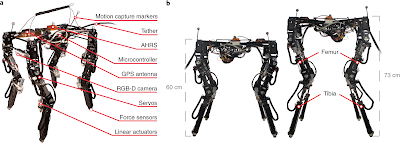

Biomorphic robots are designed to change their gaits in order to save energy. When such a robot encounters an obstacle or a change in the underlying terrain, it is knocked out of its lowest-energy state and forced to adapt to a new walking pattern. The mechanics of the underlying machine rely heavily on this self-adaptation.

Tilden turned to BEAM-type robots after failing to build a traditional electronic robot butler in the late 1980s. Even after being programmed with Isaac Asimov's Three Laws of Robotics, the robot could barely vacuum floors. Tilden abandoned the project completely after hearing MIT roboticist Rodney Brooks speak at the University of Waterloo on the advantages of simple sensorimotor, stimulus-response robotics over computationally complex mobile devices.

Tilden left Brooks' lecture wondering whether dependable robots could be built without computer processors or artificial intelligence. Rather than having intelligence written into the robot's programming, Tilden hypothesized that intelligence might arise from the robot's operating environment, as well as from the emergent features that result from interacting with that world.

At the Los Alamos National Laboratory in New Mexico, Tilden studied and built a variety of unusual analog robots, relying on rapid prototyping and on off-the-shelf and cannibalized components. Los Alamos was looking for robots that could operate in unstructured, unpredictable, and possibly hazardous conditions.

Tilden built almost a hundred robot prototypes. His SATBOT autonomous spacecraft prototype could align itself with the Earth's magnetic field on its own. He built fifty insectoid robots for Marine Corps Base Quantico that could creep through minefields and identify explosive devices. A robot known as the "aggressive ashtray" spits water at smokers. A "solar spinner" was used to clean windows. A biomorph made from five broken Sony Walkmans reproduced the behavior of an ant.

At Los Alamos, Tilden also began building Living Machines powered by solar cells. Because of their energy source, these machines ran extremely slowly, but they were dependable and efficient over long periods of time, often more than a year.

Tilden's first robot designs were based on thermodynamic conduit engines, namely tiny, efficient solar engines that could fire single neurons.
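BEAM solar engines are commonly described as circuits in which a solar cell slowly charges a storage capacitor until a trigger voltage is reached, at which point the stored energy is dumped into a motor in a single burst. The Python sketch below is a simplified, idealized model of that charge-and-fire cycle, not Tilden's circuit: the constant solar current, the instantaneous discharge, and all parameter names and values are assumptions chosen for illustration. It also hints at why solar-powered machines moved slowly: a weak charging current means a long wait between bursts.

```python
from dataclasses import dataclass


@dataclass
class SolarEngine:
    """Idealized charge-and-fire model of a BEAM-style solar engine.

    All fields are hypothetical illustration parameters, not measured values.
    """

    solar_current_a: float  # assumed constant charging current from the solar cell (A)
    capacitance_f: float    # storage capacitor (F)
    trigger_v: float        # capacitor voltage at which the engine fires (V)
    cutoff_v: float         # voltage at which the motor is switched off again (V)

    def __post_init__(self) -> None:
        if not 0 <= self.cutoff_v < self.trigger_v:
            raise ValueError("need 0 <= cutoff_v < trigger_v")
        if self.solar_current_a <= 0 or self.capacitance_f <= 0:
            raise ValueError("current and capacitance must be positive")

    def pulse_energy_j(self) -> float:
        """Energy released per burst: E = 1/2 * C * (Vt^2 - Vc^2)."""
        return 0.5 * self.capacitance_f * (self.trigger_v**2 - self.cutoff_v**2)

    def firing_times(self, duration_s: float) -> list[float]:
        """Times (s) at which the engine fires, starting from an empty capacitor.

        With a constant current I the voltage rises as dV/dt = I / C, so the
        first burst comes after C * Vt / I seconds and each later burst after
        a further C * (Vt - Vc) / I seconds (discharge treated as instantaneous).
        """
        first = self.capacitance_f * self.trigger_v / self.solar_current_a
        period = self.capacitance_f * (self.trigger_v - self.cutoff_v) / self.solar_current_a
        times = []
        k = 0
        while first + k * period <= duration_s:
            times.append(first + k * period)
            k += 1
        return times


# Hypothetical example: 1 mA into 1 mF, firing at 2 V and cutting off at 1 V.
engine = SolarEngine(solar_current_a=0.001, capacitance_f=0.001, trigger_v=2.0, cutoff_v=1.0)
print(engine.firing_times(5.0))  # [2.0, 3.0, 4.0, 5.0]
print(engine.pulse_energy_j())   # energy per burst, roughly 1.5 mJ
```

Halving the assumed solar current in this model doubles the wait between bursts without changing the energy of each burst, which is the trade-off between speed and dependability described above.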

Rather than emulating the workings of a brain, his "nervous net" neurons controlled the rhythms and patterns of motion in robot bodies. Tilden's idea was to maximize the number of possible patterns while using as few transistors as feasible. He learned that with just twelve transistors he could create six different movement patterns. By folding the six patterns into a figure eight in a symmetrical robot chassis, Tilden could replicate hopping, leaping, running, sitting, crawling, and a variety of other behaviors.
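One common way to picture such a nervous net is as a small ring of neurons through which one or more pulses circulate, each neuron switching on an actuator while a pulse passes through it. The Python sketch below is a discrete-time toy of that idea, not a model of Tilden's actual circuits: the ring size, the one-tick pulse hand-off, and the motor labels in `MOTOR_FOR_NEURON` are hypothetical assumptions for illustration. It shows how a single fixed ring can yield more than one rhythm depending on how many pulses circulate and where they start.

```python
# Hypothetical mapping from ring position to the actuator it drives.
MOTOR_FOR_NEURON = ["front-left", "rear-left", "front-right", "rear-right"]


def ring_activity(n_neurons: int, initial_active: set[int], steps: int) -> list[tuple[int, ...]]:
    """Discrete-time toy of pulses circulating around a ring of neurons.

    At every tick each active neuron hands its pulse to the next neuron in the
    ring (real analog neurons pass pulses through RC delays, not clock ticks).
    Returns one 0/1 activity vector per tick.
    """
    active = {i % n_neurons for i in initial_active}
    history = []
    for _ in range(steps):
        history.append(tuple(1 if i in active else 0 for i in range(n_neurons)))
        active = {(i + 1) % n_neurons for i in active}
    return history


def rhythm_period(history: list[tuple[int, ...]]) -> int:
    """Smallest p such that the recorded activity repeats every p ticks."""
    for p in range(1, len(history)):
        if all(history[t] == history[t + p] for t in range(len(history) - p)):
            return p
    return len(history)


def motors_on(state: tuple[int, ...]) -> list[str]:
    """Actuators driven by the neurons that are active in this state."""
    return [MOTOR_FOR_NEURON[i] for i, on in enumerate(state) if on]


one_pulse = ring_activity(4, {0}, 8)      # a single pulse: one motor at a time
two_pulses = ring_activity(4, {0, 2}, 8)  # two opposite pulses: motors in pairs
print(rhythm_period(one_pulse), rhythm_period(two_pulses))  # 4 2
print([motors_on(s) for s in two_pulses[:2]])
# [['front-left', 'front-right'], ['rear-left', 'rear-right']]
```

Only the starting arrangement of pulses differs between the two runs; the ring itself is unchanged, which loosely mirrors the goal of getting several movement patterns out of very few components.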

Since then, Tilden has advocated a new set of robot principles for such survivalist wild automata. Tilden's Laws of Robotics state that (1) a robot must protect its existence at all costs; (2) a robot must obtain and maintain access to its own power source; and (3) a robot must always seek out better power sources.

Tilden believes that wild robots will be used to rehabilitate ecosystems that have been harmed by humans.

Tilden had another breakthrough when he introduced very inexpensive robots as toys for the general public and robot enthusiasts. He wanted his robots to be in the hands of as many people as possible, so that hackers, hobbyists, and members of various maker communities could reprogram and modify them.

Tilden designed the toys so that they could be dismantled and analyzed, which makes them easy to hack. Everything is color-coded and labeled, and all of the wires have gold-plated connectors that can be pulled apart.

Tilden is presently working with WowWee Toys in Hong Kong on consumer-oriented entertainment robots:

- B.I.O. Bugs, Constructobots, G.I. Joe Hoverstrike, Robosapien, Roboraptor, Robopet, Roboreptile, Roboquad, Roboboa, Femisapien, and Joebot are all popular WowWee robot toys.

- The Roboquad was designed for the Jet Propulsion Laboratory's (JPL) Mars exploration program.

- Tilden is also the developer of the Roomscooper cleaning robot.

WowWee Toys sold almost three million of Tilden's robot designs by 2005.

Tilden made his first robotic doll when he was three years old. At the age of six, he built a Meccano suit of armor for his cat. At the University of Waterloo, he majored in Systems Engineering and Mathematics.

Tilden is presently working on OpenCog and OpenCog Prime alongside artificial intelligence pioneer Ben Goertzel. OpenCog is a worldwide initiative, supported by the Hong Kong government, that aims to develop an open-source emergent artificial general intelligence framework as well as a common architecture for embodied robotic and virtual cognition. Dozens of IT businesses across the globe already use OpenCog components.

Tilden has worked on a variety of films and television series as a technical adviser or robot designer, including Lara Croft: Tomb Raider (2001), The 40-Year-Old Virgin (2005), X-Men: The Last Stand (2006), and Paul Blart: Mall Cop (2009). In The Big Bang Theory (2007–2019), his robots often appear on the bookshelves of Sheldon's apartment.


See also:

Brooks, Rodney; Embodiment, AI and.
